The Nine Planets is an encyclopedic overview with facts and information about mythology and current scientific knowledge of the planets, moons, and other objects in our solar system and beyond.

The 8 Planets in Our Solar System

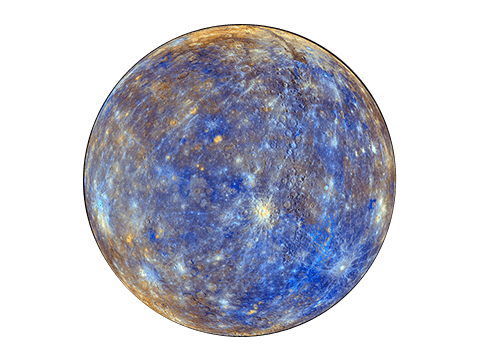

Mercury

The smallest and fastest planet, Mercury is the closest planet to the Sun and whips around it every 88 Earth days.

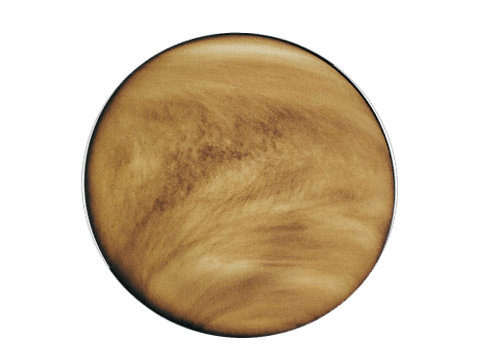

Venus

Spinning in the opposite direction to most planets, Venus is the hottest planet, and one of the brightest objects in the sky.

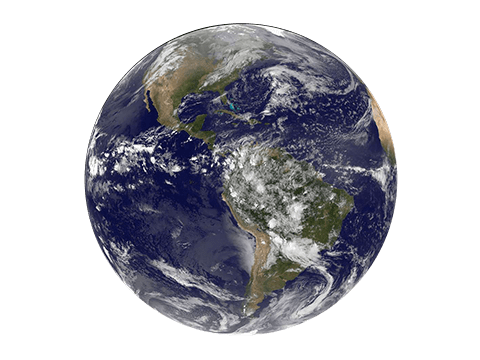

Earth

The place we call home, Earth is the third rock from the Sun and the only planet known to host life, and lots of it too!

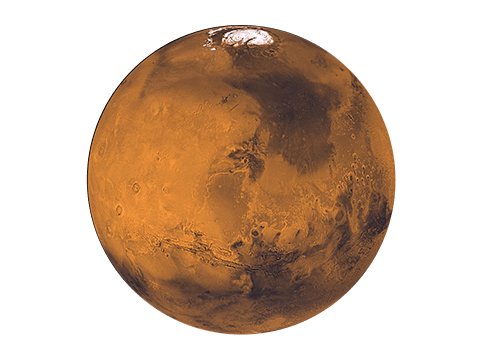

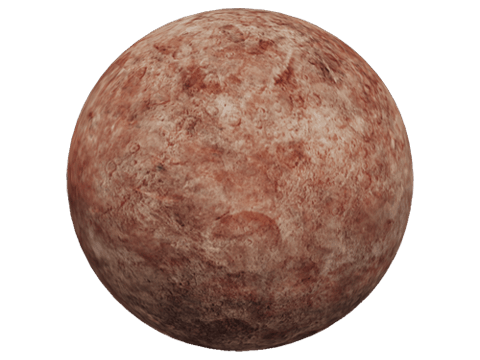

Mars

The Red Planet is a dusty, cold world with a thin atmosphere and is home to four NASA robots.

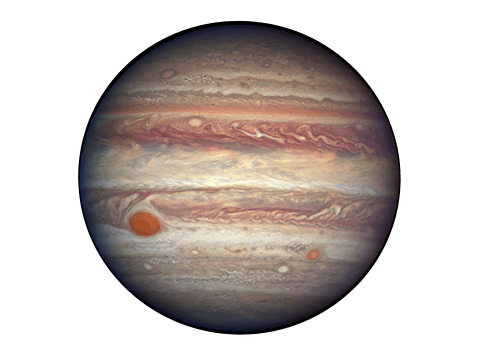

Jupiter

Jupiter is a massive planet, more than twice as massive as all the other planets combined, and has a centuries-old storm that is bigger than Earth.

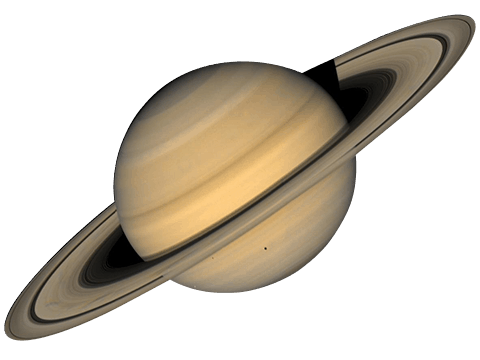

Saturn

The most recognizable planet, thanks to its system of icy rings, Saturn is a unique and fascinating world.

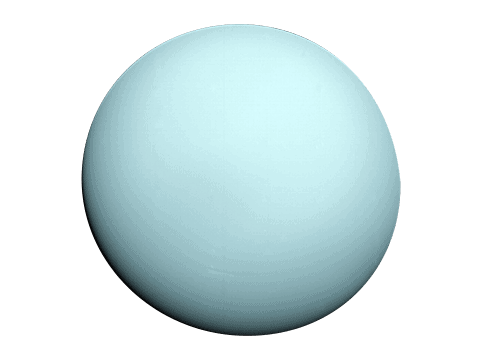

Uranus

Uranus has an unusual rotation: unlike the other planets, it spins on its side, with its axis tilted by nearly 98 degrees.

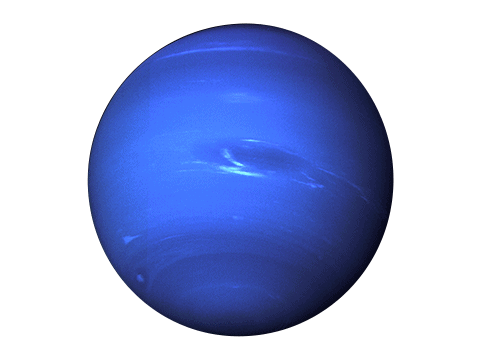

Neptune

Neptune is now the most distant planet and is a cold and dark world nearly 3 billion miles from the Sun.

The Five Dwarf Planets

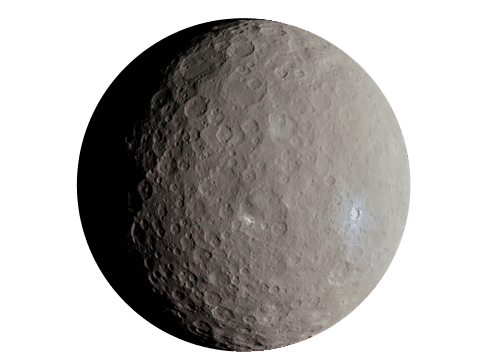

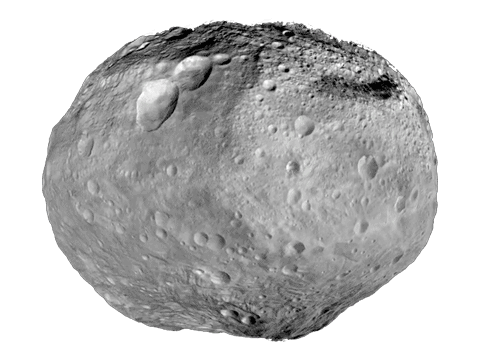

Ceres

Ceres is the largest object in the asteroid belt. It was reclassified as a dwarf planet in 2006, even though it has only about one-fourteenth of Pluto's mass.

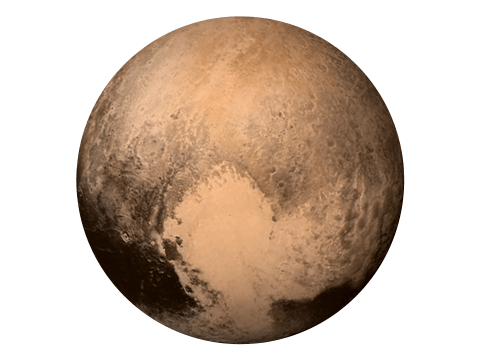

Pluto

Pluto will always be the ninth planet to us! Smaller than Earth's Moon, Pluto was considered a planet until 2006 and has five moons of its own!

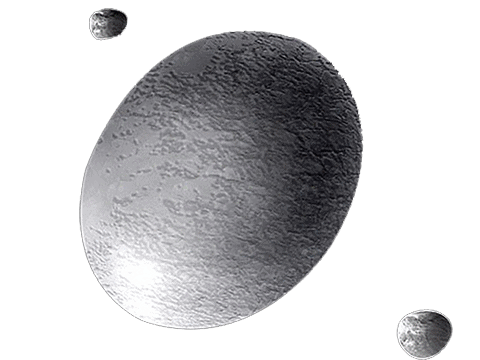

Haumea

Haumea lives in the Kuiper belt and is about the same size as Pluto. It spins very fast, which distorts its shape, making it look like a football.

Makemake

Also in the Kuiper belt, Makemake is the second-brightest object in the belt, after Pluto. The discoveries of Makemake and Eris are the reason Pluto is no longer a planet.

Eris

Eris is about the same size as Pluto but three times farther from the Sun! It is so far away that we know little about this extremely cold and remote dwarf planet.

Other Objects in The Solar System

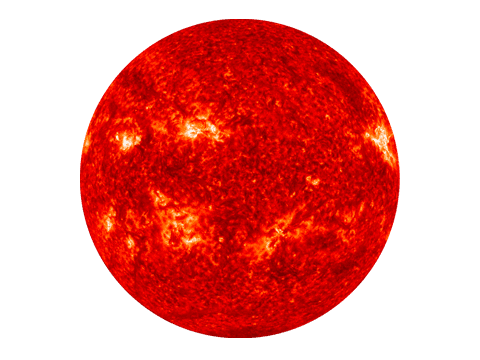

The Sun

The Sun is the heart of our solar system and its gravity is what keeps every planet and particle in orbit. This yellow dwarf star is just one of billions like it across the Milky Way galaxy.

The Moon

The only place beyond Earth that humans have visited, the Moon is the largest and brightest object in our night sky. It raises the tides and helps keep the tilt of Earth's axis stable.

Comets

Comets are snowballs made up of frozen gas, rock, and dust that orbit the Sun. As they get closer to the Sun, they heat up and leave a trail of glowing dust and gases.

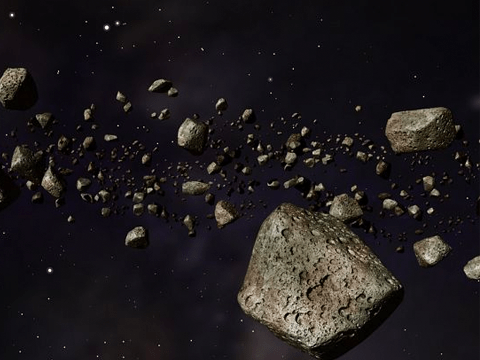

Asteroids

Asteroids are small, rocky debris left over from the formation of our solar system about 4.6 billion years ago. More than 822,000 asteroids are currently known.

Asteroid Belt

Between the orbits of Mars and Jupiter, the asteroid belt contains an estimated 1.9 million asteroids. Even so, the total mass of all objects in the belt is less than that of Earth's Moon.
